How I built MagistrOS, the intelligent teacher assistant for the classroom

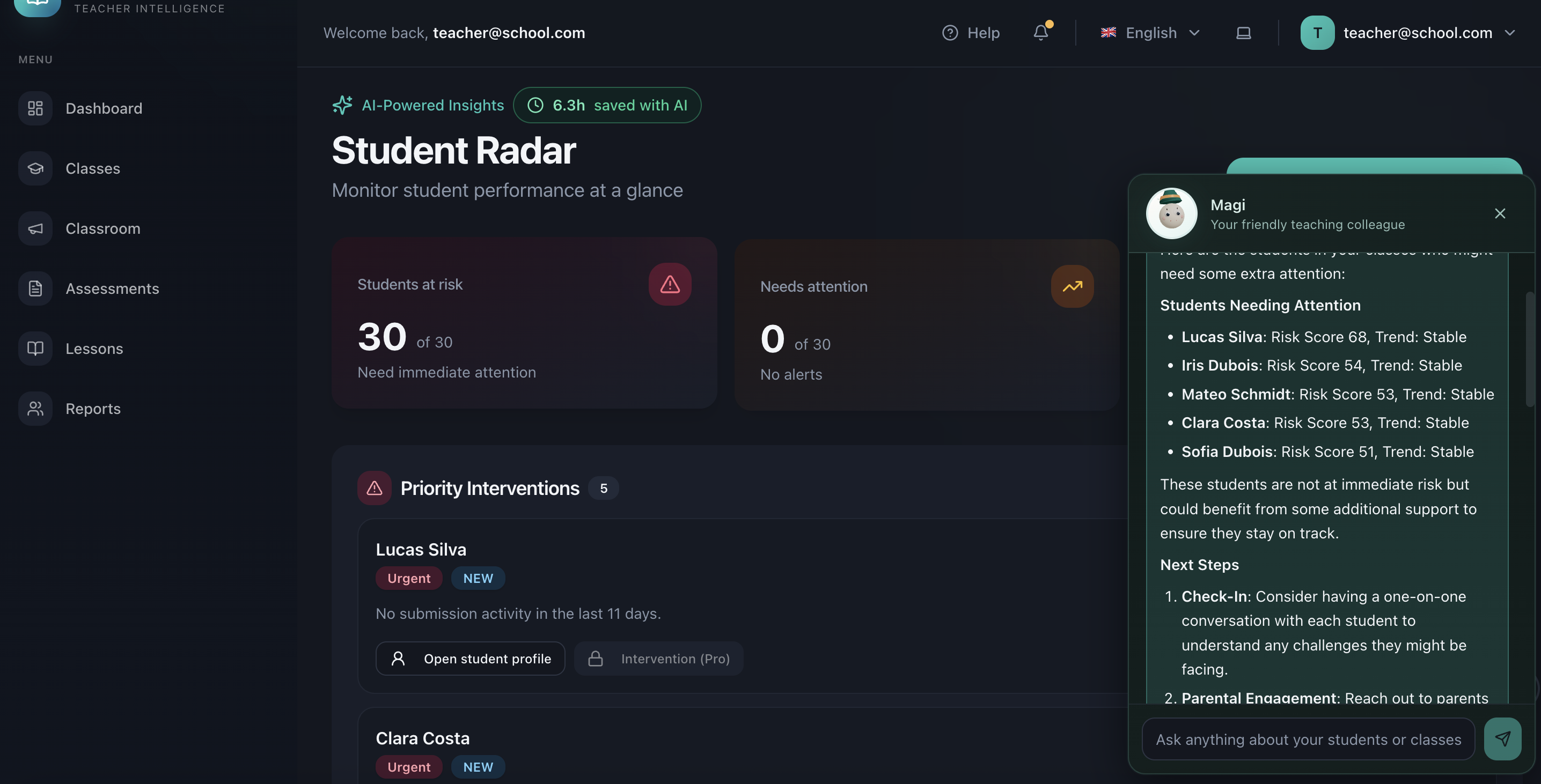

MagistrOS is my most ambitious project so far. It helps teachers spot early warning signs in their classrooms, such as students falling behind. It connects to Google Classroom to import data, and it is fully GDPR-compliant: data stays in the EU, and student data is never exposed.

It can create lessons and assignments from URLs, PDFs, or plain text, while keeping student data inside the classroom context.

At the core of MagistrOS is Magi, a smart chatbot that can answer questions about classroom data, draft parent reports based on grounded real data, and even add behavioral logs for students.

I built MagistrOS because I wanted to learn what RAG really is and how it works behind the scenes. Wrappers hallucinate, and when it comes to sensitive K-12 data, doing things properly is critical. I also run QuizGeniusAI, which has grown organically to 650 users (many of them teachers), so I wanted to extend that concept beyond simple quizzes.

I used two main skills for this project: rag-engineer and rag-implementation. I wanted to compare how they performed during planning vs. execution, and also see whether Codex or Claude Code handled RAG better.

I started planning with Gemini. I ran research on Google Classroom: weaknesses, teacher complaints, countries with the highest usage (USA, UK, Sweden, Indonesia), platform evolution, and competitor strategy. After that, I ran my own “complaint crawler,” an app I built to search the web and Reddit for complaints and discussions on specific topics.

Once I had the initial concept and the three main features, I moved to Codex in plan mode. I pasted the full Gemini conversation and asked Codex to challenge it with fresh research. Codex performed very well in research and produced a complete plan, including the RAG system. Then, in a separate chat, I used the rag-implementation skill to review that plan, especially the RAG section. Finally, I pasted the plan into Claude Code for the last iterations and implementation.

Codex handled most of the UI work. The frontend-design skill was strong, and I explicitly asked it not to use shadcn. The layout turned out well. Claude Code handled the initial RAG implementation, including a mock seeding system so I could always test with data.

Main RAG Issues I Had to Fix

- Wrong voice for the real user

  Early outputs sounded like tooling docs for engineers, not guidance for teachers. I had to rewrite prompts and clean up i18n strings so responses were plain, practical, and classroom-friendly.

- Low-evidence behavior was unstable

  When retrieval confidence dropped, answers were either generic or too weak to be useful. This was the hardest part. I moved high-stakes flows to strict grounding, so the assistant now says what is missing instead of guessing.

- Retrieval quality needed heavy tuning

  Dense search alone missed obvious context. Lower thresholds improved recall but also introduced noisy matches. I had to tune thresholds, add hybrid retrieval (semantic + lexical), and add reranking before results became consistent.

- Debugging was blind at first

  Without clear retrieval diagnostics, every bad answer looked the same. Adding retrieval-quality logs and claim/retrieval audits made it possible to separate data gaps from prompt issues and fix the right layer.
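To make the hybrid retrieval point concrete, here is a minimal sketch of one common way to merge semantic and lexical results: reciprocal rank fusion (RRF). This is an illustration, not necessarily how MagistrOS fuses results; the document ids, the two hit lists, and `k=60` are all assumptions made up for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default, not a value
    taken from MagistrOS.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for one teacher question (ids are invented).
dense_hits = ["note_12", "report_3", "log_7"]        # semantic (embedding) search
lexical_hits = ["log_7", "note_12", "assignment_9"]  # keyword search

fused = reciprocal_rank_fusion([dense_hits, lexical_hits])
print(fused)
```

Documents that both retrievers agree on rise to the top, which is why fusion tends to be less noisy than simply lowering the dense-search threshold. A reranker would then reorder the top of `fused`.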

What looked like “just tuning RAG” became a full trust pipeline: voice, retrieval, evidence validation, and safe fallback behavior all had to work together.
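The safe fallback part of that pipeline can be sketched as an evidence gate that runs before any answer is generated. This is a simplified illustration under stated assumptions: the `Chunk` type, the source names, the `min_score` threshold, and the message wording are hypothetical and not taken from the MagistrOS codebase.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str   # hypothetical source label, e.g. "gradebook"
    text: str
    score: float  # retrieval or rerank score in [0, 1]; higher is better


def check_evidence(chunks, required_sources, min_score=0.5):
    """Return usable evidence, or a refusal that names what is missing."""
    strong = [c for c in chunks if c.score >= min_score]
    found = {c.source for c in strong}
    missing = sorted(set(required_sources) - found)
    if missing:
        # Strict grounding: do not guess, tell the teacher what is missing.
        return {
            "status": "insufficient_evidence",
            "message": "I can't answer this yet. Missing data: "
            + ", ".join(missing) + ".",
            "evidence": [],
        }
    return {"status": "ok", "message": "", "evidence": strong}


# A parent report needs both grades and behavior notes (an assumption).
chunks = [
    Chunk("gradebook", "Math quiz: 4/10", 0.82),
    Chunk("behavior_log", "Arrived late twice", 0.31),  # too weak to trust
]
reply = check_evidence(chunks, ["gradebook", "behavior_log"])
print(reply["message"])
```

Only when the gate returns `"ok"` would the evidence be handed to the model, so a weak retrieval produces an explicit gap message rather than a generic answer.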

The Bigger Problem: Trust

The main issue in this app is the same issue I had in previous apps: trust. Teachers rarely trust an app they do not know, especially when it asks to import classroom data. Google is already under scrutiny for student login and data concerns, so the bar is high.

I ran a full review with Perplexity on the landing page and the core concept. After several iterations, I implemented these changes:

- I built a mock-data demo so teachers can see how the app works before connecting anything.

- I ask for minimal permissions at login, then request broader permissions only when the teacher decides to connect.

- I built an IT page showing exactly what data is used and how it is handled.
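The minimal-permissions step maps onto Google's incremental authorization, where a later consent request sets `include_granted_scopes=true` so earlier grants carry over. Below is a rough sketch of building the two authorization URLs. The exact scopes MagistrOS requests are not stated here, so the two read-only Classroom scopes, the client id, and the redirect URI are assumptions for illustration.

```python
from urllib.parse import urlencode

GOOGLE_AUTH_ENDPOINT = "https://accounts.google.com/o/oauth2/v2/auth"

# Step 1: sign-in only. Step 2: requested when the teacher chooses to connect.
LOGIN_SCOPES = ["openid", "email"]
CLASSROOM_SCOPES = [  # assumed scopes; the real app may request others
    "https://www.googleapis.com/auth/classroom.courses.readonly",
    "https://www.googleapis.com/auth/classroom.rosters.readonly",
]


def build_auth_url(client_id, redirect_uri, scopes, state):
    """Build a Google OAuth 2.0 authorization URL for the given scopes."""
    params = {
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "response_type": "code",
        "scope": " ".join(scopes),
        # Keep previously granted scopes when asking for more later.
        "include_granted_scopes": "true",
        "state": state,
    }
    return GOOGLE_AUTH_ENDPOINT + "?" + urlencode(params)


# Placeholder values; a real app uses its own client id and callback URL.
login_url = build_auth_url(
    "my-client-id", "https://example.com/callback", LOGIN_SCOPES, "s1"
)
connect_url = build_auth_url(
    "my-client-id", "https://example.com/callback", CLASSROOM_SCOPES, "s2"
)
```

The teacher sees only the sign-in consent screen first; the Classroom consent screen appears later, at the moment they decide to connect.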

According to my own brain intelligence (no AI needed), apps like this can be killed by Google with one small move. So I planned MagistrOS not to depend entirely on Classroom. For example, assignments can be created and shared in Classroom, but also outside of it.

One strong product idea came from Claude (the app, not Claude Code): teachers can generate server-side PIN codes that map to students' names (also verified server-side, never exposed), so students do not need to log in or expose personal data. The PIN codes use fun, child-friendly names, which turned out to be a smart UX choice.
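Here is a minimal sketch of how such PIN codes could work, assuming the server stores only a keyed hash of each PIN and maps it to an internal student id. The word lists, the PIN format, and the `PinRegistry` class are hypothetical; the real implementation may differ.

```python
import hashlib
import hmac
import secrets

# Tiny illustrative word lists; a real list would be much larger.
ADJECTIVES = ["happy", "brave", "sunny", "clever"]
ANIMALS = ["otter", "panda", "fox", "owl"]


def new_pin():
    """Generate a child-friendly PIN such as 'brave-otter-07'."""
    adjective = secrets.choice(ADJECTIVES)
    animal = secrets.choice(ANIMALS)
    return f"{adjective}-{animal}-{secrets.randbelow(100):02d}"


def pin_digest(pin, server_key):
    """Keyed hash of the PIN, so raw PINs are never stored."""
    return hmac.new(server_key, pin.lower().encode("utf-8"), hashlib.sha256).hexdigest()


class PinRegistry:
    """Server-side map from PIN digest to student id (in memory here)."""

    def __init__(self, server_key):
        self._key = server_key
        self._students = {}

    def issue(self, student_id):
        # Retry on the rare collision so every PIN is unique.
        while True:
            pin = new_pin()
            digest = pin_digest(pin, self._key)
            if digest not in self._students:
                self._students[digest] = student_id
                return pin

    def resolve(self, pin):
        """Return the student id for a PIN, or None if it is unknown."""
        return self._students.get(pin_digest(pin, self._key))


registry = PinRegistry(server_key=b"replace-with-a-real-secret")
pin = registry.issue("student-42")
print(pin, "->", registry.resolve(pin))
```

The student only ever types the PIN; the name lookup happens on the server. Because word-based PINs are easy to guess, a real deployment would also need rate limiting and expiry.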

I think MagistrOS has real potential, but as with most of my apps, I still struggle with promotion. Having no social media presence and no marketing expertise are my biggest limitations.

Anyway, this was a very fun project. I learned a lot, not just about RAG, but also about privacy, handling sensitive data, and how difficult marketing is.

Even more fun: writing this while seeing Kimi and Charles both on the podium. That early wake-up was worth it.